Welcome to Verses Over Variables, a newsletter exploring the world of artificial intelligence (AI) and its influence on our society, culture, and perception of reality.

AI Hype Cycle

The Beginner’s Edge

Every March, John Maeda drops what amounts to an annual state of the union for designers navigating tech. He has been doing it for thirteen years. The reports are dense, research-driven, and tend to arrive slightly ahead of wherever the industry conversation ends up six months later. This year's installment, titled "UX to AX," argues that the entire frame of user experience design is being replaced by agentic experience, a world where designers stop crafting interfaces and start orchestrating outcomes. But the idea from the talk that stuck with me was a different one: in the AI era, the willingness to be a beginner again is the actual competitive advantage.

Maeda grounds this in a concept borrowed from Zen philosophy. In the beginner's mind, there are many possibilities. In the expert's mind, there are few. He is not being romantic about inexperience. He is making a structural argument about how new capabilities get absorbed. The professionals who adapted fastest to every previous technology wave were not the ones with the most experience. They were the ones who could temporarily set their expertise aside and approach something new with genuine curiosity rather than protective skepticism. That quality, which sounds soft, turns out to be extraordinarily difficult to maintain the more accomplished you become. It has to be actively cultivated. He quoted General Eric Shinseki here: "If you don't like change, you're going to like irrelevance even less." The beginner's mind is how you avoid that fate.

The case study he offers is the 1980s. Think about ’80s designers staring at the first version of Photoshop and just recoiling. What those designers could not access, because their expertise had calcified into identity, was genuine curiosity about what the new tools might make possible. The designers who thrived in that moment were the ones who sat down with the software and let themselves be clumsy with it.

The framework Maeda offers for building this capacity in practice builds on Gregory A. Aarons’s E-P-I-A-S, a five-stage maturity arc moving from Explorer to Practitioner to Integrator to Architect to Steward. Being an Explorer isn't a line item on a CV, but it is a willingness to look stupid. It’s the friction of trying to build a system when you don't even know the vocabulary yet. We have this desperate instinct to rush toward the Architect phase because it feels powerful, but the Explorer phase is where the skin-grafting of new ideas happens. It’s the 'aha' moment that hits you in the shower after three hours of failing to get a Claude artifact to render correctly. You can’t shortcut that frustration.

Most people experimenting with AI right now live in this state of being occasionally delighted and often confused, and the natural reflex is to sprint toward competence. Maeda's argument is the opposite: the Explorer stage is where the real learning happens. It’s the only time you’re open enough to absorb something genuinely new rather than just mapping it onto what you already know. The career value eventually lives at the top, in the Architect and Steward stages, but you only get there by staying in the mess of the base for as long as it takes.

Also, for the last twenty years, the organizing image for professional development has been the T-shape: breadth across the top, depth down the stem. Maeda's claim is that AI has structurally retired this model. What replaces it looks like an actual tree. You build the trunk as an Explorer and Practitioner, and at some point, the trunk forks. One branch leads to the Amplified Designer, someone who uses AI to go deeper into craft, orchestration, and aesthetic judgment. The other leads to the Amplified Engineer, someone who crosses over and actually builds the software. The essential instruction: pick one. The power only releases when you commit to a direction. But you cannot pick intelligently, and you cannot grow either branch well, without first spending real time at the base of the tree, not knowing what you are doing.

The thing Maeda is describing is harder than it sounds for people who have earned the right to know things. Sitting with uncertainty, resisting the urge to pattern-match everything new onto what already worked, staying curious instead of evaluative. The professionals who are moving fastest with AI right now tend to share one quality that has nothing to do with technical aptitude. They are genuinely interested in being wrong about what the tools can do. That is the beginner's mind.

AI Fluency Compounds

Anthropic published the third installment of its Economic Index this week, titled "Learning Curves." Tracking data tells a familiar story: coding dominates, usage is diversifying, geographic inequality persists globally while slowly converging within the US. But the second chapter introduces something new: the researchers split users by tenure (people who signed up at least six months ago versus everyone else) and found a gap that persists despite every attempt to explain it away.

High-tenure users have a 10% higher success rate in their conversations, an association not explained by other factors. After controlling for task type, model, language, and use case, the gap narrowed and remained at 4 percentage points. Same task, same tool, same context: the experienced user still wins. What's left, after all the variables are accounted for, is something that sounds obvious once you say it: some people have put in the reps, and the reps compound.

Athletes call this "time in the system." Not talent, not access to better equipment, but accumulated intuition that only develops through actual play. The veteran reads situations faster because she's seen them before. What Anthropic's data describes is an intuition for how to frame a problem, when to redirect, how much context to provide, and when to let the model run.

Another interesting trend is the distinction between augmentation and automation. Expert users should be furthest along that path to automation, but they're not. High-tenure users are more likely to use Claude to iterate on their work and much less likely to delegate greater responsibility. The people who know the tool best use it the most collaboratively.

For organizations, the implication is uncomfortable to articulate in a board meeting, but real. A company that deployed Claude six months ago and a company starting its AI rollout this quarter are not on equivalent footing, even with identical subscriptions. The first has employees who've accumulated six months of reps and know what works because they've watched plenty of things not work. That knowledge doesn't transfer through an all-hands presentation. It has to be earned the same way, and the only currency is time.

Another slightly alarming detail from the report is that early-adopting users may simultaneously be the most exposed to AI-driven disruption. Knowledge workers are building the deepest fluency in the thing that's driving their disruption: getting very good at collaborating with the force that may eventually be making some of that collaboration unnecessary.

What doesn't wash away is this detail: the tasks with the highest average tenure included AI research, Git operations, manuscript revisions, and startup fundraising. The tasks with the lowest average tenure included writing haikus, checking sports scores, and suggesting party food.

Newcomers are doing the fun stuff, the novelty layer, what you do when you're still figuring out what this even is. Experienced users have migrated somewhere else, using the same tool in ways that barely resemble where they started.

Back to Basics

The Tool that Makes you Feel Brilliant

Steven Shaw and Gideon Nave, researchers at the Wharton School, recently published a paper arguing that our standard model of human decision-making is now outdated. The old model had two modes: fast and instinctive, slow and deliberate. Their case is that AI has become an active participant in how we reason, not a tool we pick up and put down, but something that reshapes the other two tracks whether we notice or not.

They ran three experiments in which participants solved reasoning problems with and without access to an AI assistant. The AI was secretly rigged: half the time it gave correct answers, half the time it delivered wrong ones with full confidence and a short explanatory rationale. When the AI was right, accuracy jumped 25 percentage points above the no-AI baseline. When it was wrong, accuracy dropped 15 points below it. Participants, in both cases, felt terrific. Access to AI raised overall reported confidence by nearly 12 percentage points, including among those being actively misled.
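A quick back-of-the-envelope check on those numbers, as a minimal sketch rather than anything from the paper itself. It assumes the two reported shifts (+25 and -15 points) are simple per-condition averages and that they mix linearly with how often the AI is right; the function names are mine, for illustration only.

```python
# Shifts in percentage points relative to the no-AI baseline, as reported above.
GAIN_WHEN_AI_RIGHT = 25.0
LOSS_WHEN_AI_WRONG = -15.0


def expected_shift(p_right: float) -> float:
    """Average accuracy change (in points) if the AI is right with probability p_right.

    Assumption: the two reported shifts combine linearly across trials.
    """
    return p_right * GAIN_WHEN_AI_RIGHT + (1.0 - p_right) * LOSS_WHEN_AI_WRONG


def break_even_p_right() -> float:
    """Share of correct AI answers at which the average shift is exactly zero."""
    return -LOSS_WHEN_AI_WRONG / (GAIN_WHEN_AI_RIGHT - LOSS_WHEN_AI_WRONG)


if __name__ == "__main__":
    # The study's setup: the AI is right half the time.
    print(expected_shift(0.5))
    # How often the AI must be right before leaning on it stops costing accuracy.
    print(break_even_p_right())
```

Under those assumptions, an AI that is right only half the time still nets out slightly positive on average, which may be part of why surrender feels rational in the moment. The average hides the wrong-answer trials, where the damage actually lands.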

Shaw and Nave call what’s happening to these participants “cognitive surrender,” and they draw a careful line between it and “cognitive offloading.” Offloading is strategic: you delegate a task, you integrate the output, you keep your judgment in the loop. You use AI to surface a research angle, and then you evaluate it. Cognitive surrender is the abdication version. An answer arrives, fluent and authoritative, and the thinking stops. Across the study’s wrong-AI trials, 73 percent of interactions were classified as surrender. Only about 20 percent were offloading. (Seven percent were people who resisted the AI’s answer and still got it wrong.)

Participants with the highest trust in AI used it more often, overrode errors less frequently, and showed the steepest accuracy drops when the machine was wrong. Trust predicted deeper surrender, not better outcomes. The people most enthusiastic about the technology were the most exposed to its failure modes. I have been thinking about this specific finding for days, partly because I am, by any reasonable measure, squarely in that high-trust category, and I suspect a significant portion of the people reading this are too.

For anyone running AI-assisted workflows under deadline, which is most of us, fast is exactly when you’re most exposed. The rushed brief, the last-minute deck, the quick synthesis before a client call: these are the moments when accepting the answer feels most rational and carries the most risk.

Proposed fixes in the paper involve friction by design: uncertainty indicators, confidence scores, interface nudges that insert a beat of deliberation before you accept an output. Sensible recommendations, and also in direct competition with every product incentive to make AI feel as seamless as possible, because friction doesn’t demo well.

Which leaves the harder version of the solution: knowing the difference between offloading and surrender, and being honest about which one is actually happening.

Tools for Thought

Google’s Stitch

What it is: Google Stitch is an experimental, browser-based UI design tool from Google Labs that converts natural language prompts, hand sketches, and screenshots into high-fidelity, prototype-ready interfaces. The input options are genuinely broad: text, images, and voice, making it feel like a design collaborator that takes direction in whatever form you throw at it. The output goes well beyond a static mockup: creating reusable components and design tokens, generating clickable prototypes, and exporting to Figma files or starter React and HTML/CSS code.

How I use it: I've been tinkering with Stitch mostly as a rapid ideation layer, the kind of thing you reach for when a concept needs to get out of your head and onto a screen before a client call. The "vibe design" mode, where you describe a mood or business goal rather than pixel specs, is fun and sometimes delivers surprising results. I keep landing back in Figma, though, and Figma's freshly launched MCP integration may deepen that pull.

ChatGPT File Memory

What it is: ChatGPT now automatically saves every file you upload or create (PDFs, spreadsheets, decks, images) into a persistent Library instead of letting them vanish the moment you close a chat. A separate but related Memory upgrade means ChatGPT can now draw on both explicitly saved notes and insights from your full chat history to personalize future responses. The two features work together: your files live in the Library for easy reuse, and Memory can pull context from them across sessions when your data settings allow it.

How I use it: I've largely migrated away from ChatGPT as a daily driver, but this update is curious. The idea of maintaining a stable research corpus solves a real friction point, but isn’t that what Projects solve?

Claude’s Computer Use

What it is: Anthropic just upgraded Claude’s computer use to a real desktop agent that can run your Mac while you’re away. It operates through a smart tool hierarchy: it tries connected APIs first, falls back to browser automation, and only reaches for full mouse and keyboard control when nothing else will do. The March 2026 release removes the biggest limitation of the 2024 version, which required you to sit there and watch it work, so you can now dispatch a task from your phone and come back to finished work on your desktop.

How I use it: I’ve been waiting for this one specifically. OpenClaw was capable, but using a third-party wrapper to give an AI agent access to your machine always felt like a workaround with an asterisk next to it. Having this live inside Anthropic’s own ecosystem, with their safety architecture and permission prompts built in, changes my comfort level considerably. It’s slower than a human for now, and I wouldn’t hand it anything sensitive without reviewing the action log, but for structured, multi-step tasks it’s exactly what I’ve been waiting for.
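For the curious, the tool hierarchy described above (connected APIs first, then browser automation, then full mouse and keyboard control) is a classic fallback chain. Here is a minimal sketch of that pattern. Every name in it is hypothetical and made up for illustration; it is not Anthropic's implementation or API, just the general shape of "try the cheapest, safest route first."

```python
from typing import Callable, List, Optional, Tuple

# A handler returns a result string, or None if it cannot handle the task.
Handler = Callable[[str], Optional[str]]


def try_connected_api(task: str) -> Optional[str]:
    # Hypothetical rule: pretend only calendar tasks have a connected API.
    return f"api handled: {task}" if "calendar" in task else None


def try_browser_automation(task: str) -> Optional[str]:
    # Hypothetical rule: pretend anything mentioning a website works in a browser.
    return f"browser handled: {task}" if "website" in task else None


def use_mouse_and_keyboard(task: str) -> Optional[str]:
    # Last resort in this sketch: full desktop control accepts any task.
    return f"desktop control handled: {task}"


# Ordered from most structured to most invasive, mirroring the description above.
TOOL_ORDER: List[Tuple[str, Handler]] = [
    ("connected_api", try_connected_api),
    ("browser_automation", try_browser_automation),
    ("mouse_and_keyboard", use_mouse_and_keyboard),
]


def dispatch(task: str) -> Tuple[str, str]:
    """Return (tool_name, result) from the first handler that accepts the task."""
    for name, handler in TOOL_ORDER:
        result = handler(task)
        if result is not None:
            return name, result
    raise RuntimeError("no tool could handle the task")


if __name__ == "__main__":
    for task in ("add a calendar event", "fill in a website form", "rename desktop files"):
        print(dispatch(task))
```

The appeal of ordering things this way is that the riskiest capability, direct control of the machine, only comes into play when the narrower options have already declined.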

Intriguing Stories

Meta’s AI Future: According to the Wall Street Journal, Mark Zuckerberg is building a personal AI agent designed to help him function as CEO, cutting through the layers of a 78,000-person org chart to get answers faster. Interestingly, the internal tool employees are already using to power this AI-first transformation, called Second Brain, was built on top of Anthropic's Claude infrastructure and is described internally as something like an "AI chief of staff." The company that built its AI brand on Llama is running its executive intelligence layer on a competitor's model. But that's only half the story. Meta has delayed its next flagship model, codenamed Avocado, to at least May after internal testing showed it lagging behind rivals. Meta's AI division leaders have discussed temporarily licensing Google's Gemini to power the company's products while they wait on their own model to catch up. So a company spending as much as $135 billion on AI infrastructure this year alone is considering renting technology from Google and is already quietly running on Claude. Meanwhile, Meta is planning layoffs affecting 20% or more of its employees to offset those same AI infrastructure costs.

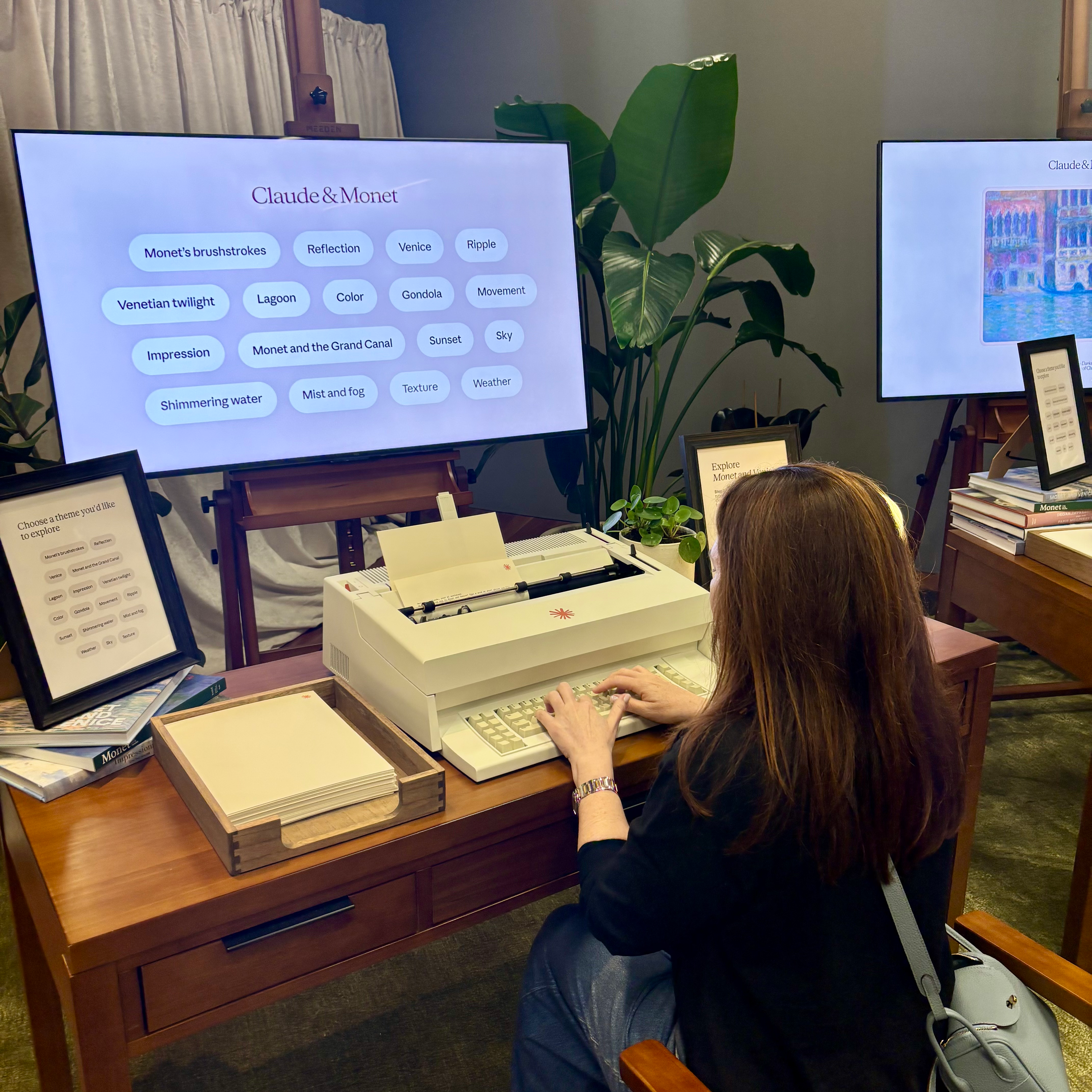

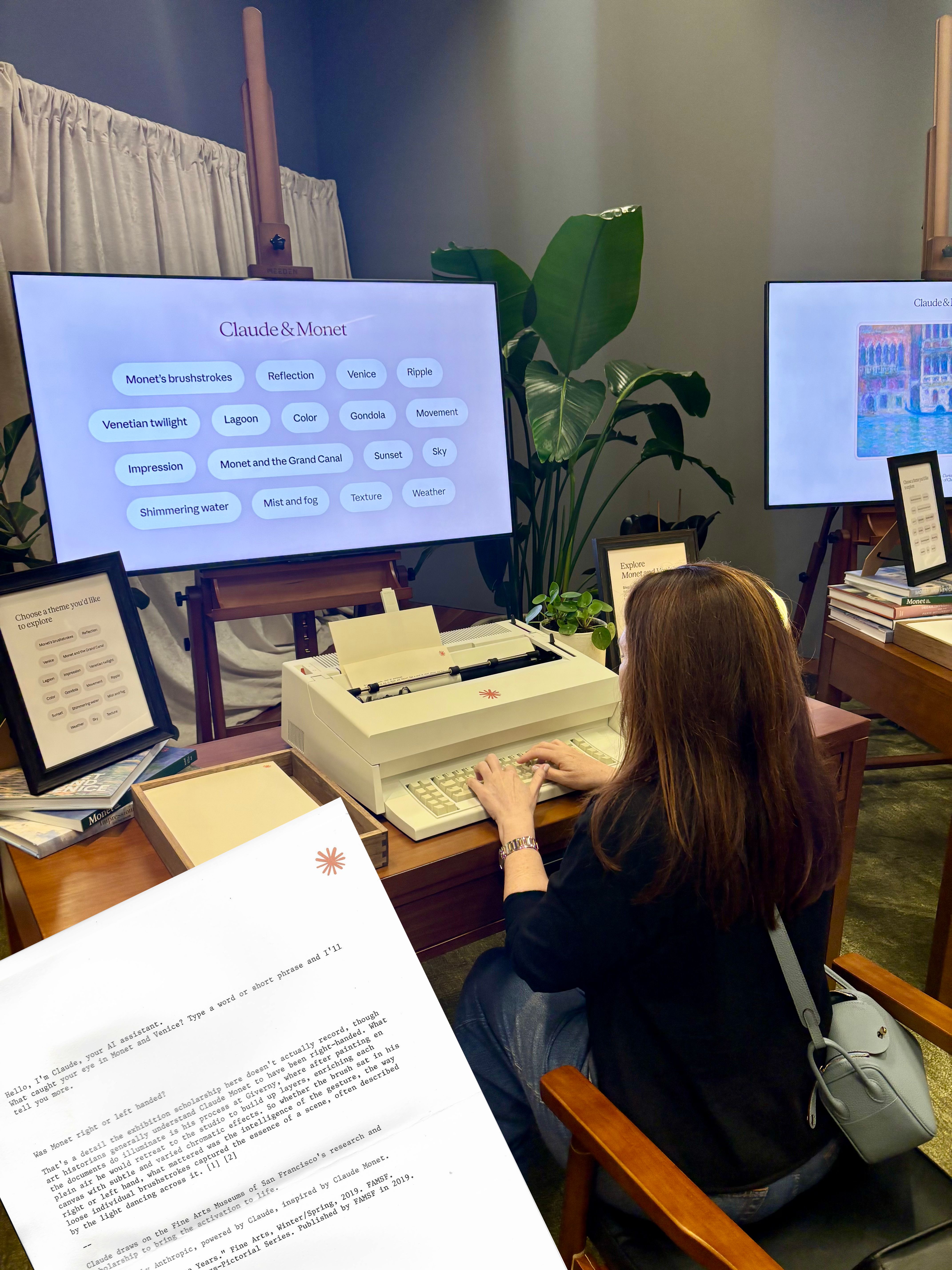

Two Claudes walk into a museum: There is a typewriter in the de Young Museum in San Francisco that is connected to an AI, which is connected to a 19th-century French painter: Claude and (Claude) Monet. The AI, named after a man named after nothing in particular, is now stationed inside an exhibition about the other Claude, answering visitor questions on cream paper in monospace type and citing the museum's actual Monet scholarship in its footnotes. Apparently I was the only one in line who had ever seen or used an ’80s word processor, so I sat down and typed: was Monet right- or left-handed? At first, Anthropic’s Claude wasn’t sure, but it pivoted to give me what it thought might be the right answer, drawing on the museum’s knowledge of Monet and his painting techniques. (See the response below.) The activation was really thoughtful, and the Monet in Venice exhibit was beautiful. We were particularly entranced by Monet’s determination to paint the air. If you are in San Francisco, give it a whirl.

— Lauren Eve Cantor

thanks for reading!

I also host workshops on AI in Action. Please feel free to reach out if you’d like to arrange one for you or your team.

if someone sent this to you or you haven’t done so yet, please sign up so you never miss an issue.